Interior Weather

Interior Weather is the first prototype of a helmet that makes you experience and feel a storm triggered by emotions shared on Twitter.

Concept

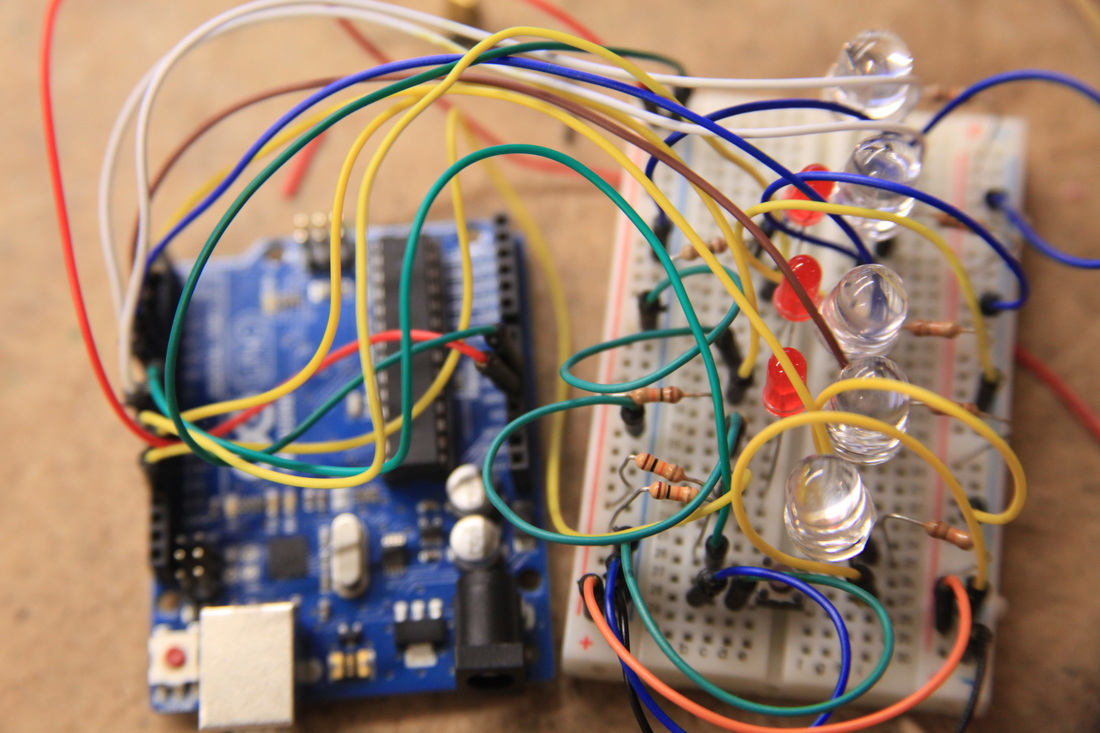

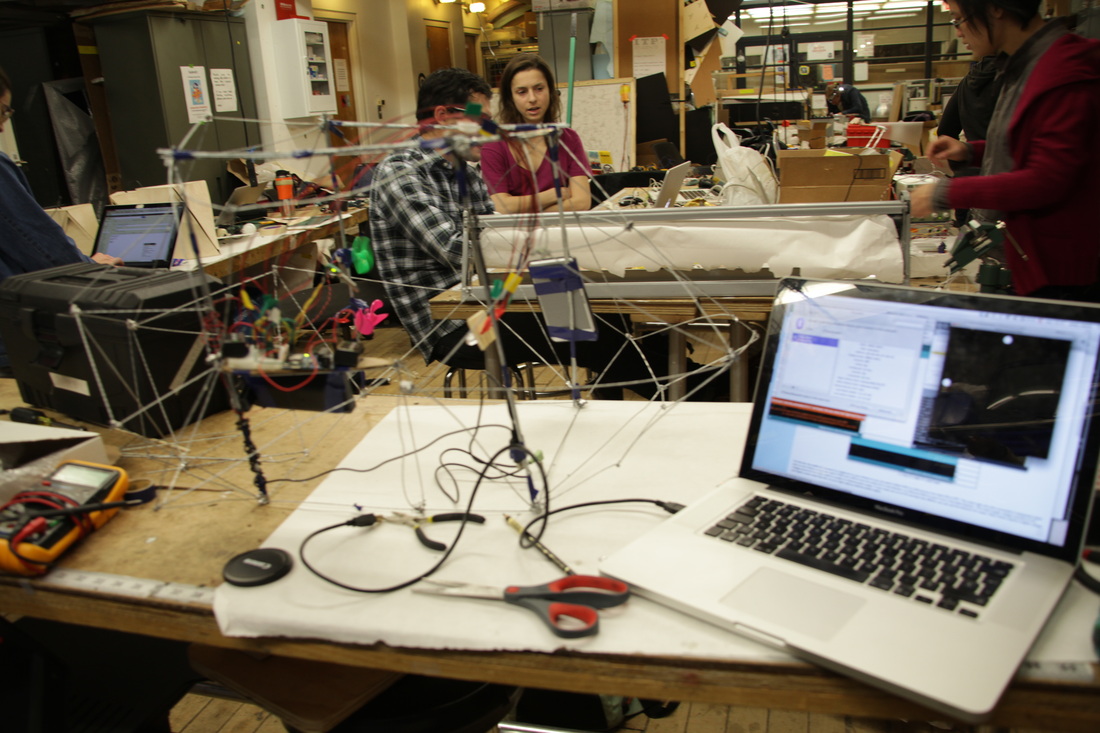


Twitter, like other social networks, has changed how we conceive of collective trust and the strategies we use to position ourselves within the social spectrum. It individualizes professional credibility and collective exchanges. It breaks up what was concentrated under the umbrellas of organizations, governments, and institutions into unique and independent particles. Every person can build their own reputation, credibility, and image without belonging to a group, a business, or a media outlet.

This triggers a phenomenon of intensive self-promotion. Tweets and statuses focus on positive, optimistic content shared by the person herself. So much so that removing narcissistic personality disorder from the official psychiatric manual has recently been proposed. It is no longer socially awkward to talk about ourselves all the time. Pretension is no longer perceived as a lack of social skills, but has become an asset. Be yourself please, and be the best.

The thing is that, at the same time as we collectively accept self-promotion as normal behavior, the rate of depression and other mental disorders keeps increasing. Catwalk yourself on Twitter, but keep your dark feelings in the darkness of your mind. It has always been that way. What has changed is that, in addition to being the one who has to deal with your own personality, you now have to be the one to promote it, no matter how you feel. What you can do and how you show it is what you are worth.

How it works

Interior Weather attempts to show how compartmentalized our lives can be on social networks by putting forward the dark feelings we would like to ignore.

It uses the Twitter API to connect Processing to 4 different live streams. Each of those live streams triggers a physical device that translates the emotion into a weather effect in the helmet.

“I feel alone” tweets trigger a wind sound and a fan;

“I am sad” tweets trigger the sound of rain;

“I am angry” tweets trigger thunder sounds;

“I am afraid of” tweets trigger broken glass sounds and an intense strobe light.
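The list above is essentially a lookup table from a tracked phrase to a weather effect. Here is a minimal sketch of that table in plain Java, so it runs outside Processing. The class name `WeatherTable`, the method `effectFor`, and the effect labels are my own illustrative choices, not names used in the sketch further down.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical lookup table: tracked phrase -> weather effect from the list above.
class WeatherTable {
    static final Map<String, String> EFFECTS = new LinkedHashMap<>();
    static {
        EFFECTS.put("I feel alone", "wind sound + fan");
        EFFECTS.put("I am sad", "rain sound");
        EFFECTS.put("I am angry", "thunder sound");
        EFFECTS.put("I am afraid of", "broken glass sound + strobe light");
    }

    // Returns the effect of the first tracked phrase found in the tweet
    // (case-insensitive), or null when no phrase matches.
    static String effectFor(String tweet) {
        String lower = tweet.toLowerCase();
        for (Map.Entry<String, String> e : EFFECTS.entrySet()) {
            if (lower.contains(e.getKey().toLowerCase())) {
                return e.getValue();
            }
        }
        return null;
    }

    public static void main(String[] args) {
        System.out.println(effectFor("Some days I feel alone in a crowd"));
    }
}
```

Keeping the phrases and effects in one place like this would make it easier to add a fifth emotion later without touching the matching logic.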

Connecting Twitter to Processing

I used the Twitter4J library to connect Processing to the Twitter API. To do so, you first need to create a developer account. Once you have it, you create a Twitter “app” that uses OAuth to secure communication between services and clients.

When you create your “app”, Twitter gives you the following:

OAuth Consumer Key;

OAuth Consumer Secret;

Access Token;

Access Token Secret.

You need them to create the handshake between Processing and the Twitter API. Then you need to create a “Twitter Stream Factory” and a listener. They go and get the tweets from the stream and wait to see how you will use them. To narrow down what you take from the stream, you have to create queries. You could look for tweets coming from a specific username, specific data, etc. It is recommended to have only one query per Twitter Factory.

This means that for Interior Weather, I had to build 4 different Twitter Factories (meaning 4 developer accounts, 4 sets of OAuth keys, and 4 sets of access tokens) to have 4 different queries live.
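With four sets of four values, it is easy to leave one blank or to publish them by accident. One possible way to handle this, sketched below in plain Java, is to keep the values in a properties file and fail early when one is missing. The class `StreamCredentials` and the key names (`alone.consumerKey` and so on) are hypothetical, chosen only for this illustration. They are not part of Twitter4J.

```java
import java.io.IOException;
import java.io.StringReader;
import java.util.Properties;

// Holds the four OAuth values one stream needs (illustrative class, not a Twitter4J type).
class StreamCredentials {
    final String consumerKey, consumerSecret, accessToken, accessTokenSecret;

    StreamCredentials(String ck, String cs, String at, String ats) {
        consumerKey = ck;
        consumerSecret = cs;
        accessToken = at;
        accessTokenSecret = ats;
    }

    // Reads "<prefix>.consumerKey" etc. and throws if any value is missing or blank.
    static StreamCredentials fromProperties(Properties p, String prefix) {
        return new StreamCredentials(
            require(p, prefix + ".consumerKey"),
            require(p, prefix + ".consumerSecret"),
            require(p, prefix + ".accessToken"),
            require(p, prefix + ".accessTokenSecret"));
    }

    private static String require(Properties p, String key) {
        String v = p.getProperty(key);
        if (v == null || v.trim().isEmpty()) {
            throw new IllegalArgumentException("Missing credential: " + key);
        }
        return v.trim();
    }

    public static void main(String[] args) throws IOException {
        // In a real sketch this text would live in a file kept out of the published code.
        String demo = "alone.consumerKey=ck\nalone.consumerSecret=cs\n"
                    + "alone.accessToken=at\nalone.accessTokenSecret=ats\n";
        Properties p = new Properties();
        p.load(new StringReader(demo));
        StreamCredentials c = fromProperties(p, "alone");
        System.out.println("Loaded credentials for 'alone': " + c.consumerKey);
    }
}
```

The same call with the prefixes `sad`, `angry`, and `afraid` would give the other three sets, and an empty value would stop the sketch at startup instead of failing silently at connection time.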

Triggering Storm Sounds

I needed to find a way to have Processing trigger sounds for each tweet query. I started by using the oscP5 library. It allows Processing to send and receive OSC messages to and from other software. I connected Processing to Ableton Live.

Every time a tweet came in from a query, Processing sent an OSC message to Ableton and an .mp3 clip was triggered. There were limitations when it came to playing more than one .mp3 at the same time. I did not find a way to have Ableton tell Processing when a track was done or was already playing. This means that every tweet would play the clip from the start, causing cuts and bugs. For example, if there was more than one tweet about anger within 5 seconds, the track would restart with each tweet instead of playing to the end before being triggered by another tweet. After 2 days of trying, I discovered I could do it in a better and simpler way using the Minim library.

The Minim library is pretty simple. You do not need to connect Processing to external sound software to play tracks; it turns Processing itself into an audio player. That is exactly what I needed, as I was using .mp3 files and was not doing anything live involving visual effects.

The code is pretty simple. You load the .mp3 files into an array the same way you would for pictures:

    import ddf.minim.*;

    Minim minim;
    AudioPlayer[] track = new AudioPlayer[9];

    void setup() {
      minim = new Minim(this);
      // Loads 0.mp3 to 8.mp3 from the sketch's data folder
      for (int i = 0; i < track.length; i++) {
        track[i] = minim.loadFile(i + ".mp3");
      }
    }

Then, when you want to play a track, you use the .play() function. The Minim documentation lists the functions of the AudioPlayer. What is tricky with Minim is that each time Processing plays an .mp3, it expects to play it from where it stopped last time. You have to .rewind() the track so it starts from the beginning the next time. My tracks are very short and will be triggered many times in draw(), depending on the incoming tweets. So I had to come up with a solution so Processing would know:

- when a track has to be triggered;

- when a track is already playing;

- when it has to go back to the beginning of the track.

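    if (aloneOn == true) {
      ellipse(width/2, height/2, 30, 30);  // on-screen indicator
      port.write('H');                     // tell the Arduino to start the fan

      if (track[7].position() > track[7].length() - 1000) {
        // Less than a second left: reset and wait for the next tweet
        aloneOn = false;
        track[7].pause();   // pause before rewinding so the track waits at 0
        track[7].rewind();
      }
      else if (track[7].isPlaying() == false) {
        track[7].play();
      }
    }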
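The three conditions above form a small state machine: idle, playing, and resetting near the end. Below is a sketch of that logic in plain Java, with a fake player standing in for Minim's AudioPlayer so it can run without any library. `Player`, `FakePlayer`, and `TrackGate` are hypothetical names. The explicit `pause()` before `rewind()` is my assumption that rewinding alone does not stop playback.

```java
// Stand-in for the AudioPlayer calls used in the sketch (times in milliseconds).
interface Player {
    int position();
    int length();
    boolean isPlaying();
    void play();
    void pause();
    void rewind();
}

// Fake player driven by a manual clock, for trying the logic without audio.
class FakePlayer implements Player {
    private final int lengthMs;
    private int positionMs = 0;
    private boolean playing = false;

    FakePlayer(int lengthMs) { this.lengthMs = lengthMs; }

    public int position() { return positionMs; }
    public int length() { return lengthMs; }
    public boolean isPlaying() { return playing; }
    public void play() { playing = true; }
    public void pause() { playing = false; }
    public void rewind() { positionMs = 0; }

    // Advances the fake clock; a playing track stops by itself at its end.
    void advance(int ms) {
        if (!playing) return;
        positionMs = Math.min(lengthMs, positionMs + ms);
        if (positionMs == lengthMs) playing = false;
    }
}

// One gate per emotion: trigger() on a tweet, update() once per frame.
class TrackGate {
    private final Player player;
    private final int tailMs;   // how close to the end counts as "finished"
    private boolean on = false;

    TrackGate(Player player, int tailMs) {
        this.player = player;
        this.tailMs = tailMs;
    }

    void trigger() { on = true; }
    boolean isOn() { return on; }

    void update() {
        if (!on) return;
        if (player.position() > player.length() - tailMs) {
            on = false;          // finished: reset and wait for the next tweet
            player.pause();
            player.rewind();
        } else if (!player.isPlaying()) {
            player.play();       // not started yet: start once
        }                        // already playing: leave it alone
    }

    public static void main(String[] args) {
        FakePlayer p = new FakePlayer(15000);
        TrackGate gate = new TrackGate(p, 1000);
        gate.trigger();
        for (int frame = 0; frame < 1000 && gate.isOn(); frame++) {
            gate.update();
            if (frame == 100) gate.trigger(); // a second tweet mid-playback changes nothing
            p.advance(16);                    // roughly one frame at 60 fps
        }
        System.out.println("on=" + gate.isOn() + " position=" + p.position());
    }
}
```

With one `TrackGate` per emotion, the four near-identical blocks in draw() could shrink to four `update()` calls, though I have not tried this in the actual sketch.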

The Physical Interface

The idea with this project was to create an object:

- that you would have to put on your head, so that you are forced to take on and experience the negative ideas we make so much effort to push away and deny;

- that would be intimate, that would make you feel that the storm created by the tweets was yours, as if it were happening in your own head.

The key word: Prototyping

The best thing I learned during this class is the idea of prototyping. It took me a long time to accept:

- my lack of certain skills: 3D design, structural design, etc.;

- that the time I could give to the project was limited, and that I would never have the time to build the perfect object, as there were so many things to learn to do so;

- that my budget was limited. I had to come to terms with the idea that I would have to compromise a lot. I cannot afford to invest a lot of money in all my projects, so I needed to accept they would look unfinished. This project is not a project, it is a prototype of a project.

For all those reasons, I decided to let it go, to stop forcing myself down the path of perfection, as it would lead to disappointment. I needed to accept that the process was more important than the project itself.

The Challenges

One of the most challenging things was dealing with a live stream of data. How do you build a coherent experience without knowing what will come up from Twitter? How do you build an experience that is ready for every eventuality? I still have a lot of work to do on the sounds. The .mp3 files are 15 seconds long. I might have to change the length so it works better.

Another challenge was the delays. There is a short delay between Twitter and Processing. It takes half a second for Processing to trigger the .mp3 when a tweet is received. There is also a delay between Processing and the Arduino. It takes a little time for the Arduino to receive the byte and read it. There is also a delay for the DC motors to start. This means that the motors do not start at exactly the same time as the .mp3 they are supposed to be aligned with.
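One possible way to narrow that gap, which I have not tested on the prototype, would be to send the serial byte immediately and hold the sound back by roughly the time the motors need to get going. The sketch below shows the timing logic only, in plain Java. `DelayedStart` is a hypothetical helper, and the numbers in `main` are placeholders, not measurements.

```java
// Hypothetical helper: send the motor command right away, start the sound a little later.
class DelayedStart {
    private final long audioDelayMs;  // assumed serial transfer + motor spin-up time
    private long audioDueAtMs = -1;   // -1 means nothing is scheduled

    DelayedStart(long serialMs, long spinUpMs) {
        this.audioDelayMs = serialMs + spinUpMs;
    }

    // Call when a tweet arrives; the caller writes the serial byte at the same moment.
    void schedule(long nowMs) {
        audioDueAtMs = nowMs + audioDelayMs;
    }

    // Call every frame with the current time (millis() in Processing).
    // Returns true exactly once, when the sound should start.
    boolean audioDue(long nowMs) {
        if (audioDueAtMs >= 0 && nowMs >= audioDueAtMs) {
            audioDueAtMs = -1;
            return true;
        }
        return false;
    }

    public static void main(String[] args) {
        DelayedStart d = new DelayedStart(20, 300); // placeholder values
        d.schedule(1000);
        System.out.println(d.audioDue(1100)); // too early
        System.out.println(d.audioDue(1320)); // due now
        System.out.println(d.audioDue(1400)); // already fired
    }
}
```

The two delays would have to be measured on the real hardware, and the delay between Twitter and Processing cannot be compensated this way since it happens before the tweet is known.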

The biggest challenge was building the object itself. For the reasons mentioned above, I did not manage to create what I wanted in the first place. I decided to build something bigger than a helmet, something that would look like a cloud. This meant that:

- it would have to be hung up somewhere, and it would not fit everybody, as it was too complicated to deal with height at this point;

- it would not be soundproof: you would hear the sounds from the room while experiencing the storm;

- I did not have the budget to buy more LEDs and more fans, so the experience is limited.

The limitations led me to do things I had not envisioned. I learned how to solder with galvanized wire, and I spent a lot of time trying to build the object in Rhino, which is how I discovered the software.

Here is the Processing code that connects to the Twitter live streams and sends serial commands to the Arduino:

    /// TWITTER ///////////////////////////////////////////////////////////////

    ////// ALONE - Connecting to the Twitter API
    static String OAuthConsumerKeyA = "NDgkkBotZeXcoAPMOsemA";
    static String OAuthConsumerSecretA = "XusVVwearPNjGwhYpYkgnlAM4WRSwu2EnGVHjQdSaOw";
    static String AccessTokenA = "102763568-uuNmUvzeOBFNyDaX1GA1XyW5TTiUekQKhLRYjuhV";
    static String AccessTokenSecretA = "TUpkVOftKcLAjsFVnzkjJV9bB30bRSbixgOF983appk";

    ////// SAD - Connecting to the Twitter API
    static String OAuthConsumerKeyB = "Z1PMOJj7f3jP0LCvl32ng";
    static String OAuthConsumerSecretB = "WRehjiit2gEk1TOb2kDvKbG8Wdwg2Qg0IoDYCZof7ek";
    static String AccessTokenB = "981269682-0kVLQO6tmaZKC8UOm49spp4K1u3e9q4vptKMcPMw";
    static String AccessTokenSecretB = "d1VLGSeKALv2dz61xx9eLdhpCqTZGuCDE1ms311w";

    ////// ANGRY - Connecting to the Twitter API
    static String OAuthConsumerKeyC = "";
    static String OAuthConsumerSecretC = "";
    static String AccessTokenC = "";
    static String AccessTokenSecretC = "";

    ////// AFRAID - Connecting to the Twitter API
    static String OAuthConsumerKeyD = "";
    static String OAuthConsumerSecretD = "";
    static String AccessTokenD = "";
    static String AccessTokenSecretD = "";

    ////// Declaring the Twitter streams, one per emotion
    TwitterStream twitterA = new TwitterStreamFactory().getInstance();
    TwitterStream twitterB = new TwitterStreamFactory().getInstance();
    TwitterStream twitterC = new TwitterStreamFactory().getInstance();
    TwitterStream twitterD = new TwitterStreamFactory().getInstance();

    ////// Find I FEEL ALONE in the live Twitter stream
    String keywordsA[] = {"I feel alone"};
    String alone = "";
    ArrayList allTweetsAlone = new ArrayList();
    boolean aloneOn;

    ////// Find I AM SAD in the live Twitter stream
    String keywordsB[] = {"I am sad"};
    String sad = "";
    ArrayList allTweetsSad = new ArrayList();
    boolean sadOn;

    ////// Find I AM ANGRY in the live Twitter stream
    String keywordsC[] = {"I am angry"};
    String angry = "";
    ArrayList allTweetsAngry = new ArrayList();
    boolean angryOn;

    ////// Find I AM AFRAID OF in the live Twitter stream
    String keywordsD[] = {"I am afraid of"};
    String afraid = "";
    ArrayList allTweetsAfraid = new ArrayList();
    boolean afraidOn;

    /// ARDUINO ///////////////////////////////////////////////////////////////

    ////// Declaring the serial communication
    import processing.serial.*;
    Serial port;

    /// MINIM /////////////////////////////////////////////////////////////////

    import ddf.minim.*;
    Minim minim;
    AudioPlayer[] track = new AudioPlayer[9];

    /// SETUP /////////////////////////////////////////////////////////////////

    void setup() {
      size(500, 500);

      ///// Communicating with Twitter: one listener and one query per stream
      connectTwitter();
      twitterA.addListener(listenerA);
      twitterA.filter(new FilterQuery().track(keywordsA));
      twitterB.addListener(listenerB);
      twitterB.filter(new FilterQuery().track(keywordsB));
      twitterC.addListener(listenerC);
      twitterC.filter(new FilterQuery().track(keywordsC));
      twitterD.addListener(listenerD);
      twitterD.filter(new FilterQuery().track(keywordsD));

      ////// Serial communication
      println(Serial.list());
      // Index 8 is specific to my machine; pick yours from the list printed above.
      // The baud rate must match Serial.begin() in the Arduino sketch.
      port = new Serial(this, Serial.list()[8], 9600);

      ////// MINIM: loads 0.mp3 to 8.mp3 from the data folder
      minim = new Minim(this);
      for (int i = 0; i < track.length; i++) {
        track[i] = minim.loadFile(i + ".mp3");
      }
    }

    /// DRAW //////////////////////////////////////////////////////////////////

    void draw() {
      background(0);

      // ALONE // H
      if (aloneOn == true) {
        ellipse(width/2, height/2, 30, 30);
        port.write('H');
        if (track[7].position() > track[7].length() - 1000) {
          aloneOn = false;
          track[7].pause();   // pause before rewinding so the track waits at 0
          track[7].rewind();
        }
        else if (track[7].isPlaying() == false) {
          track[7].play();
          port.write('H');
        }
      }

      // SAD // L
      if (sadOn == true) {
        ellipse(30, 250, 30, 30);
        port.write('L');
        if (track[6].position() > track[6].length() - 1000) {
          sadOn = false;
          track[6].pause();
          track[6].rewind();
        }
        else if (track[6].isPlaying() == false) {
          track[6].play();
          port.write('L');
        }
      }

      // ANGRY // M
      if (angryOn == true) {
        ellipse(250, 30, 30, 30);
        port.write('M');
        if (track[1].position() > track[1].length() - 1000) {
          angryOn = false;
          track[1].pause();
          track[1].rewind();
        }
        else if (track[1].isPlaying() == false) {
          track[1].play();
          port.write('M');
        }
      }

      // AFRAID // N
      if (afraidOn == true) {
        ellipse(420, 250, 30, 30);
        port.write('N');
        if (track[8].position() > track[8].length() - 500) {
          afraidOn = false;
          track[8].pause();
          track[8].rewind();
        }
        else if (track[8].isPlaying() == false) {
          track[8].play();
          port.write('N');
        }
      }

      // No emotion active: keep the background track (5) playing in a loop
      if (aloneOn == false && sadOn == false && angryOn == false && afraidOn == false) {
        track[5].play();
        if (track[5].position() > track[5].length() - 500) {
          track[5].rewind();
        }
      }
    }

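    ///////////////////////////////////////////////////////////////////////////

    void stop() {
      // Close every loaded track (indices 0 to 8; track[9] does not exist)
      for (int i = 0; i < track.length; i++) {
        track[i].close();
      }
      minim.stop();
      super.stop();
    }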

    ///////// Listens for new tweets with ALONE ////////////////////////////////

    StatusListener listenerA = new StatusListener() {
      public void onStatus(Status status) {
        alone = status.getText();
        allTweetsAlone.add(alone);
        for (int i = 0; i < allTweetsAlone.size(); i++) {
          String thisTweet = (String) allTweetsAlone.get(i);
          println("stringAlone: " + thisTweet);
        }
        aloneOn = true;   // picked up by draw() on the next frame
        println("stringAlonesize: " + allTweetsAlone.size());
      }

      public void onDeletionNotice(StatusDeletionNotice statusDeletionNotice) {
        System.out.println("Got a status deletion notice id:" + statusDeletionNotice.getStatusId());
      }

      public void onTrackLimitationNotice(int numberOfLimitedStatuses) {
        System.out.println("Got track limitation notice:" + numberOfLimitedStatuses);
      }

      public void onScrubGeo(long userId, long upToStatusId) {
        System.out.println("Got scrub_geo event userId:" + userId + " upToStatusId:" + upToStatusId);
      }

      public void onException(Exception ex) {
        ex.printStackTrace();
      }
    };

    ///////// Listens for new tweets with SAD //////////////////////////////////

    StatusListener listenerB = new StatusListener() {
      public void onStatus(Status status) {
        sad = status.getText();
        allTweetsSad.add(sad);
        for (int i = 0; i < allTweetsSad.size(); i++) {
          String thisTweet = (String) allTweetsSad.get(i);
          println("stringSAD: " + thisTweet);
        }
        sadOn = true;   // picked up by draw() on the next frame
        println("stringSADsize: " + allTweetsSad.size());
      }

      public void onDeletionNotice(StatusDeletionNotice statusDeletionNotice) {
        System.out.println("Got a status deletion notice id:" + statusDeletionNotice.getStatusId());
      }

      public void onTrackLimitationNotice(int numberOfLimitedStatuses) {
        System.out.println("Got track limitation notice:" + numberOfLimitedStatuses);
      }

      public void onScrubGeo(long userId, long upToStatusId) {
        System.out.println("Got scrub_geo event userId:" + userId + " upToStatusId:" + upToStatusId);
      }

      public void onException(Exception ex) {
        ex.printStackTrace();
      }
    };

    ///////// Listens for new tweets with ANGRY ////////////////////////////////

    StatusListener listenerC = new StatusListener() {
      public void onStatus(Status status) {
        angry = status.getText();
        allTweetsAngry.add(angry);
        for (int i = 0; i < allTweetsAngry.size(); i++) {
          String thisTweet = (String) allTweetsAngry.get(i);
          println("stringANGRY: " + thisTweet);
        }
        angryOn = true;   // picked up by draw() on the next frame
        println("stringANGRYsize: " + allTweetsAngry.size());
      }

      public void onDeletionNotice(StatusDeletionNotice statusDeletionNotice) {
        System.out.println("Got a status deletion notice id:" + statusDeletionNotice.getStatusId());
      }

      public void onTrackLimitationNotice(int numberOfLimitedStatuses) {
        System.out.println("Got track limitation notice:" + numberOfLimitedStatuses);
      }

      public void onScrubGeo(long userId, long upToStatusId) {
        System.out.println("Got scrub_geo event userId:" + userId + " upToStatusId:" + upToStatusId);
      }

      public void onException(Exception ex) {
        ex.printStackTrace();
      }
    };

    ///////// Listens for new tweets with AFRAID ///////////////////////////////

    StatusListener listenerD = new StatusListener() {
      public void onStatus(Status status) {
        afraid = status.getText();
        allTweetsAfraid.add(afraid);
        for (int i = 0; i < allTweetsAfraid.size(); i++) {
          String thisTweet = (String) allTweetsAfraid.get(i);
          println("stringAfraid: " + thisTweet);
        }
        afraidOn = true;   // picked up by draw() on the next frame
        println("stringAfraidsize: " + allTweetsAfraid.size());
      }

      public void onDeletionNotice(StatusDeletionNotice statusDeletionNotice) {
        System.out.println("Got a status deletion notice id:" + statusDeletionNotice.getStatusId());
      }

      public void onTrackLimitationNotice(int numberOfLimitedStatuses) {
        System.out.println("Got track limitation notice:" + numberOfLimitedStatuses);
      }

      public void onScrubGeo(long userId, long upToStatusId) {
        System.out.println("Got scrub_geo event userId:" + userId + " upToStatusId:" + upToStatusId);
      }

      public void onException(Exception ex) {
        ex.printStackTrace();
      }
    };

    ////// Initial connection

    void connectTwitter() {
      // Connection for ALONE
      twitterA.setOAuthConsumer(OAuthConsumerKeyA, OAuthConsumerSecretA);
      AccessToken accessTokenA = loadAccessTokenA();
      twitterA.setOAuthAccessToken(accessTokenA);

      // Connection for SAD
      twitterB.setOAuthConsumer(OAuthConsumerKeyB, OAuthConsumerSecretB);
      AccessToken accessTokenB = loadAccessTokenB();
      twitterB.setOAuthAccessToken(accessTokenB);

      // Connection for ANGRY
      twitterC.setOAuthConsumer(OAuthConsumerKeyC, OAuthConsumerSecretC);
      AccessToken accessTokenC = loadAccessTokenC();
      twitterC.setOAuthAccessToken(accessTokenC);

      // Connection for AFRAID
      twitterD.setOAuthConsumer(OAuthConsumerKeyD, OAuthConsumerSecretD);
      AccessToken accessTokenD = loadAccessTokenD();
      twitterD.setOAuthAccessToken(accessTokenD);
    }

    /////// Loading up the access tokens

    // Access token for ALONE
    private static AccessToken loadAccessTokenA() {
      return new AccessToken(AccessTokenA, AccessTokenSecretA);
    }

    // Access token for SAD
    private static AccessToken loadAccessTokenB() {
      return new AccessToken(AccessTokenB, AccessTokenSecretB);
    }

    // Access token for ANGRY
    private static AccessToken loadAccessTokenC() {
      return new AccessToken(AccessTokenC, AccessTokenSecretC);
    }

    // Access token for AFRAID
    private static AccessToken loadAccessTokenD() {
      return new AccessToken(AccessTokenD, AccessTokenSecretD);
    }
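In the draw() loop above, the command byte is written to the serial port on every frame for as long as an emotion is active. A possible refinement, which I have not tried on the prototype, is to write a byte only when it differs from the last one sent. The sketch below shows the idea in plain Java, with an `OutputStream` standing in for the Processing serial port. `ChangeOnlySender` is a hypothetical name.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.OutputStream;
import java.io.UncheckedIOException;

// Writes a command byte only when it differs from the last one written.
class ChangeOnlySender {
    private final OutputStream out;  // stands in for the serial port
    private int last = -1;           // -1 means nothing has been sent yet

    ChangeOnlySender(OutputStream out) {
        this.out = out;
    }

    // Returns true if the byte was actually written.
    boolean send(char command) {
        if (command == last) {
            return false;
        }
        try {
            out.write(command);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        last = command;
        return true;
    }

    public static void main(String[] args) {
        ByteArrayOutputStream port = new ByteArrayOutputStream();
        ChangeOnlySender sender = new ChangeOnlySender(port);
        char[] frames = {'H', 'H', 'H', 'L', 'L', 'H'};  // one command per frame
        for (char c : frames) {
            sender.send(c);
        }
        System.out.println(new String(port.toByteArray()));
    }
}
```

This keeps the Arduino's serial buffer from filling with repeated bytes, at the cost of relying on every single byte arriving, since a lost command is no longer resent on the next frame.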

Here is the Arduino code that receives the serial commands from Processing and activates the devices:

    // Motor A
    const int enablePin = 7;
    const int motor1Pin = 6;
    const int motor2Pin = 5;

    // Motor B
    const int enableBPin = 4;
    const int motorB1Pin = 3;
    const int motorB2Pin = 2;

    // Strobe LEDs
    const int ledPinA = 8;
    const int ledPinB = 9;
    const int ledPinC = 10;
    const int ledPinD = 11;
    const int ledPinF = 12;

    int incomingByte;

    void setup() {
      Serial.begin(9600);
      pinMode(motor1Pin, OUTPUT);
      pinMode(motor2Pin, OUTPUT);
      pinMode(enablePin, OUTPUT);
      pinMode(enableBPin, OUTPUT);
      pinMode(motorB1Pin, OUTPUT);
      pinMode(motorB2Pin, OUTPUT);
      pinMode(ledPinA, OUTPUT);
      pinMode(ledPinB, OUTPUT);
      pinMode(ledPinC, OUTPUT);
      pinMode(ledPinD, OUTPUT);
      pinMode(ledPinF, OUTPUT);
      //digitalWrite(enablePin, HIGH);
      //digitalWrite(enableBPin, HIGH);
    }

    // Runs both fan motors forward when on is true, stops them otherwise.
    void setMotors(bool on) {
      digitalWrite(enablePin, on ? HIGH : LOW);
      digitalWrite(motor1Pin, on ? HIGH : LOW);
      digitalWrite(motor2Pin, LOW);
      digitalWrite(enableBPin, on ? HIGH : LOW);
      digitalWrite(motorB1Pin, on ? HIGH : LOW);
      digitalWrite(motorB2Pin, LOW);
    }

    // Switches all five LEDs on or off together.
    void setLeds(bool on) {
      digitalWrite(ledPinA, on ? HIGH : LOW);
      digitalWrite(ledPinB, on ? HIGH : LOW);
      digitalWrite(ledPinC, on ? HIGH : LOW);
      digitalWrite(ledPinD, on ? HIGH : LOW);
      digitalWrite(ledPinF, on ? HIGH : LOW);
    }

    void loop() {
      if (Serial.available() > 0) {
        incomingByte = Serial.read();

        if (incomingByte == 'H') {   // ALONE: fans on, LEDs off
          setMotors(true);
          setLeds(false);
          Serial.println("ALONE");
        }
        if (incomingByte == 'L') {   // SAD: sound only, everything off
          setMotors(false);
          setLeds(false);
          Serial.println("SAD");
        }
        if (incomingByte == 'M') {   // ANGRY: sound only, everything off
          setMotors(false);
          setLeds(false);
          Serial.println("ANGRY");
        }
        if (incomingByte == 'N') {   // AFRAID: fans off, LEDs on
          setMotors(false);
          setLeds(true);
          Serial.println("AFRAID");
        }
      }
    }